The EU General Data Protection Regulation – What Is It and How Can Graph Help?

It was a long time coming, but the EU data security and privacy law, known as the General Data Protection Regulation (GDPR), is now in full force across the EU. The negotiating parties (the EU Council, Parliament, and Commission) finalized the GDPR text in December 2015 and officially approved the law in April 2016. The GDPR went into effect in May 2018.

The regulation can be seen as an evolution of the EU’s earlier data rules, the Data Protection Directive (DPD). If your company is new to the EU market, then the GDPR might be a challenge. However, any company that follows IT best practices or industry standards (PCI DSS, NIST CSF, ISO 27001, etc.) shouldn’t find the GDPR too burdensome. One way to describe the GDPR is that it simply legislates a lot of common-sense data security ideas, especially from the ‘Privacy by Design’ school of thought: minimize personal data collection, delete personal data that’s no longer necessary, restrict access, and secure data through its entire lifecycle.

Identifying the data you have, and whether it is compliant, is difficult and very time-consuming. Over the course of this article, I’ll examine how graph analytics can help accelerate that process.

DPD 2.0

The EU Data Protection Directive was implemented in 1995 and was superseded by the GDPR in 2018. As technology marched on, some of its shortcomings became more apparent. The internet, the cloud, and big data were just a few of the factors that forced the EU to reconsider its approach to its data security laws. Most importantly, organizations lacked visibility into the enormous amounts of data they stored, and into whether that data contained sensitive personal information that could be exposed to the public, an insider threat actor, or an external bad actor. That gap made a new, more modern regulation even more necessary.

One of the main problems with the Directive was that it allowed member countries to write their own legislation using the Directive as a template (‘transposing,’ in EU bureaucratese) and then enforce the rules separately. As technology changed, member countries arrived at different interpretations of what constituted a personal identifier (MAC addresses? Biometric data?) and of who is responsible when data is in the cloud (the company or the service provider?).

Realizing the old data security law had to be revamped, the EU Commission started the process of creating new legislation in 2012. Its primary goals were a single law covering all EU countries (‘harmonization,’ as it’s called) and a ‘one-stop-shop’ approach to enforcement through a single data authority. The GDPR is not a complete rewrite of the DPD. Instead, it enhances the DPD. Interestingly, back in the 1990s the DPD also had the goal of becoming a single law to replace individual national laws. The GDPR looks like it finally realizes that dream, or at least it has come a lot closer.

Ensuring Compliance Through Graph

When you look over what the GDPR brings for individuals, you can see that there’s a breach notification requirement that forces companies to notify the data authorities and consumers when there’s been a data exposure. There’s no single, comparable nationwide requirement here in the US. How do you ensure that the data is reviewed regularly with the solutions in place today? You will still struggle unless you implement some form of graph technology. Graph technology gives you deeper visibility across all the security tools your organization has deployed, because it links the entities those tools report on (users, hosts, files) into one connected view.

Another change is that the penalties for non-compliance are significant: as much as 4% of a company’s global revenue. To avoid this penalty, each company must identify the GDPR’s requirements and be able to prove that it adheres to each of them. One could argue that the GDPR is really focusing on multinationals, particularly US ones, which earn most of their revenue outside of the EU.

In the GDPR, personal data means any information “relating to a data subject.” A data subject is “an identified natural person or a natural person who can be identified, directly or indirectly, by means reasonably likely to be used” by someone. This somewhat convoluted definition echoes the language of the original DPD. As with the old rule, the GDPR encompasses obvious identifiers like phone numbers, addresses, and account numbers as well as new internet-era identifiers, such as email addresses and biometrics: anything that relates to the person.

The GDPR also accounts for what’s known as quasi-identifiers, which we’ve written about before in this blog. These are multiple data fields, typically geographic and date fields, that you can use to indirectly zero in on an individual with a little bit of processing and some external reference sources.
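To make the quasi-identifier idea concrete, here is a minimal Python sketch. The records, field names, and the `unique_combinations` helper are all made up for illustration; it simply flags combinations of geographic and date fields that occur exactly once in a data set and so may point to a single person.

```python
from collections import Counter

# Hypothetical records with direct identifiers (name, email) already removed.
records = [
    {"zip": "10115", "birth_date": "1980-03-14", "diagnosis": "asthma"},
    {"zip": "10115", "birth_date": "1980-03-14", "diagnosis": "flu"},
    {"zip": "10117", "birth_date": "1975-11-02", "diagnosis": "diabetes"},
]

def unique_combinations(rows, quasi_identifiers):
    """Return the quasi-identifier value combinations that occur exactly once."""
    counts = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return [combo for combo, n in counts.items() if n == 1]

# A combination that appears only once can single out one individual
# when joined with an external source such as a public register.
risky = unique_combinations(records, ["zip", "birth_date"])
print(risky)
```

In this toy data set, two records share the same postal code and birth date, while the third is unique and therefore the one most at risk of re-identification.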

In any case, personal data is what you are supposed to protect! Data that has been anonymized is not covered by the GDPR, nor was it covered by the DPD.

The GDPR also continues with the DPD’s terminology of data controller and data processor, which are used throughout the law.

A data controller is anyone who determines the “purposes and means of processing of the personal data.” It’s another way of saying the controller is the company or organization that makes all the decisions about initially accepting data from the data subject.

A data processor is then anyone who processes data for the controller. The GDPR specifically includes storage as a processing function, so cloud-based virtual storage counts as processing.

Putting all this together, the GDPR places rules on protecting personal data as it’s collected by data controllers and passed to data processors. One shortcoming of the DPD was that it left some loopholes for data processors (cloud providers, for example) that the GDPR now effectively closes off.

Articulating the Articles

Now let’s get into some of GDPR’s legal-ese.

The new law puts in place more specific obligations on data processors and therefore the cloud. This is described in Article 28 (processor) and Article 32 (security of processing). This also parallels the DPD’s Article 17 and effectively says that the cloud provider must protect the security of data given to it by the data controller.

The GDPR adds the ability for someone to directly sue a processor for damages. In the DPD, only the data controller could be held liable.

Article 5 (principles related to personal data processing) essentially echoes the DPD’s minimization requirements: personal data must be “adequate, relevant, and not excessive in relation to the purposes for which they are processed …” But it also says the data controller is ultimately responsible for the security and processing of the data.

Article 25 (data protection by design and by default) further enshrines Privacy by Design ideas. The article is more explicit about data retention limits and minimization in that you must set limits on data (duration, access) by default, and it gives the EU Commission the power to lay down more specific technical regulations later.

More Articles: The New Stuff

There are a few new requirements that directly impact IT. Again, if you’re following common-sense best practices, none of the following should be too much of a burden. The DPIAs (see below), however, are an extra bureaucratic layer that will likely cause some head-scratching (and cursing), and the details will probably have to be worked out by the regulators.

Article 30 (records of processing activities) adds new requirements for data controllers and processors to document their operations. There are now rules for categorizing the types of data collected by controllers, recording the recipients to whom the data is disclosed, and indicating the time limits after which the personal data is erased.
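One way to picture such a record is as a small data structure. The sketch below is only an assumption about how a team might model it in Python; the `ProcessingRecord` class, its field names, and the `gaps` helper are hypothetical and not taken from the regulation or from any product.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class ProcessingRecord:
    """Hypothetical record of one processing activity."""
    activity: str
    data_categories: List[str]                 # types of personal data collected
    recipients: List[str]                      # to whom the data is disclosed
    erasure_time_limit: Optional[str] = None   # e.g. "24 months after last contact"

    def gaps(self) -> List[str]:
        """List the documentation fields that are still empty."""
        missing = []
        if not self.data_categories:
            missing.append("data_categories")
        if not self.recipients:
            missing.append("recipients")
        if not self.erasure_time_limit:
            missing.append("erasure_time_limit")
        return missing

record = ProcessingRecord(
    activity="newsletter",
    data_categories=["email address"],
    recipients=[],
)
print(record.gaps())
```

A check like `gaps()` makes incomplete documentation visible before a regulator asks for it.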

Article 35 calls for data protection impact assessments (DPIAs) before the controller initiates new services or products involving the data subject’s health, economic situation, location, and personal preferences, and more specifically data related to race, sex life, and infectious diseases. The DPIAs are meant to protect the data subject’s privacy by, among other restrictions, forcing the controller to describe what security measures will be put in place.

The new breach notification rule has probably received the most attention in the media. Prior to the GDPR, only telecom companies and ISPs had to report breaches within 24 hours under the e-Privacy Directive.

Modeled on this earlier Directive, the GDPR’s Article 33 says that controllers must tell the supervisory authority the nature of the breach, categories of data and number of data subjects affected, and measures taken to mitigate the breach.

Article 34 adds that data subjects must also be told about the breach, but only if the breach results in a high risk to their “rights and freedoms.” If a company has encrypted the data or taken some other security measures that render the data unreadable, then they won’t have to inform the subject.
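The notification logic described in the last two paragraphs can be written down as a short Python sketch. It is a simplification of the text above, not legal advice, and the function and parameter names are hypothetical.

```python
def breach_notifications(high_risk_to_subjects: bool, data_unreadable: bool) -> list:
    """Return who must be told about a breach, following the summary above.

    Simplifying assumption: the supervisory authority is always notified;
    data subjects are notified only when the breach poses a high risk to
    their rights and freedoms and the data was not rendered unreadable
    (for example, through encryption).
    """
    recipients = ["supervisory authority"]
    if high_risk_to_subjects and not data_unreadable:
        recipients.append("data subjects")
    return recipients

print(breach_notifications(high_risk_to_subjects=True, data_unreadable=False))
print(breach_notifications(high_risk_to_subjects=True, data_unreadable=True))
```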

Article 17 (right to erasure and “to be forgotten”) has strengthened the DPD’s existing rules on deletion and then adds the controversial right to be forgotten. There’s now language that would force the controller to take reasonable steps to inform third parties of a request to have information deleted.

This means that a social media service that publishes a subscriber’s personal data to the web would have to not only remove the initial information but also contact other websites that may have copied it. This would not be an easy process!

Finally, a requirement that has received less attention but has important implications is the new principle of extraterritoriality described in Article 3. It says that even if a company doesn’t have a physical presence in the EU but collects data about EU data subjects (for example, through a website), all the requirements of the GDPR are in effect.

This is a very controversial idea, especially in terms of how it would be enforced.

What Is Graph’s Place in GDPR Compliance?

While you have now had at least four years to work toward GDPR compliance, we’ve come up with four areas where you can focus your attention and resources to make graph analytics an integral part of your compliance efforts:

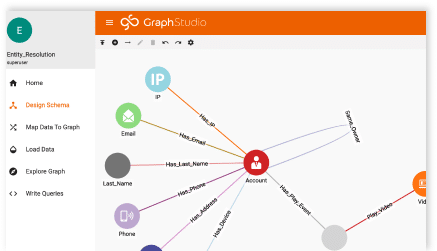

Data Classification

Know where personal data is stored on your system, especially in unstructured formats in documents, presentations, and spreadsheets. The use of algorithms and deep link analytics can help you identify locations of data, data mining efforts, lateral movement of sensitive data, etc. This is critical for both protecting the data and following through on requests to correct and erase personal data.
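As a rough illustration of how link analysis supports an erasure request, the Python sketch below walks a small, made-up graph to find every place a data subject’s information has flowed. The node names and the `locations_of` helper are hypothetical; a graph database would run the same kind of traversal at far larger scale.

```python
from collections import deque

# Hypothetical graph: an edge means "personal data flows or is copied from A to B".
edges = {
    "subject:alice": ["crm:record-17"],
    "crm:record-17": ["file:q3-report.xlsx", "db:marketing.contacts"],
    "file:q3-report.xlsx": ["share:sales-team"],
    "db:marketing.contacts": [],
    "share:sales-team": [],
    "subject:bob": ["crm:record-42"],
    "crm:record-42": [],
}

def locations_of(subject, graph):
    """Breadth-first search for every node reachable from a data subject."""
    seen = {subject}
    queue = deque([subject])
    found = []
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                found.append(neighbor)
                queue.append(neighbor)
    return found

alice_locations = locations_of("subject:alice", edges)
print(alice_locations)
```

The traversal follows copies several hops away from the original record, which is exactly where manual inventories tend to lose track of the data.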

Metadata

Because the GDPR limits data retention, you’ll need basic information on when the data was collected, why it was collected, and its purpose. Personal data residing in IT systems should be periodically reviewed to see whether it needs to be kept or whether it should be deleted or destroyed. Graph can help you identify the age of your data by examining its metadata for these key characteristics.
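A periodic retention review can be as simple as comparing collection dates against a retention period. The Python sketch below assumes hypothetical metadata fields and illustrative retention periods; actual periods depend on your own legal basis and policies.

```python
from datetime import date, timedelta

# Hypothetical metadata; the retention periods are illustrative only.
items = [
    {"id": "crm:record-17", "purpose": "billing",
     "collected": date(2015, 1, 10), "retention_days": 365 * 7},
    {"id": "file:q3-report.xlsx", "purpose": "marketing",
     "collected": date(2016, 6, 1), "retention_days": 365 * 2},
]

def due_for_review(items, today):
    """Return the ids of items whose retention period has already expired."""
    return [
        item["id"]
        for item in items
        if item["collected"] + timedelta(days=item["retention_days"]) < today
    ]

expired = due_for_review(items, today=date(2019, 1, 1))
print(expired)
```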

Data Access Governance

With data protection by design and by default now the law, companies should focus on data governance basics. For unstructured data, this should include understanding who is accessing personal data in the corporate file system, deciding who should be authorized to access it, and limiting file permissions based on employees’ actual roles, i.e., role-based access controls. Identifying these things through graph analytics and in-database machine learning will allow you to expand your governance.
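The comparison between actual access and role-based permissions can be sketched in a few lines of Python. The roles, users, file paths, and the `out_of_role_access` helper are invented for illustration.

```python
# Hypothetical role definitions, user assignments, and access log.
role_permissions = {
    "hr": {"hr/payroll.xlsx", "hr/contracts"},
    "sales": {"sales/leads.csv"},
}
user_roles = {"dana": "hr", "eli": "sales"}
access_log = [
    ("dana", "hr/payroll.xlsx"),
    ("eli", "sales/leads.csv"),
    ("eli", "hr/payroll.xlsx"),
]

def out_of_role_access(log, user_roles, role_permissions):
    """Return (user, resource) pairs where the user's role does not cover the resource."""
    violations = []
    for user, resource in log:
        # Users with no known role get an empty permission set.
        allowed = role_permissions.get(user_roles.get(user), set())
        if resource not in allowed:
            violations.append((user, resource))
    return violations

violations = out_of_role_access(access_log, user_roles, role_permissions)
print(violations)
```

Each violation is a candidate for either tightening file permissions or correcting the role definition.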

Monitoring

The breach notification requirement places a new burden on data controllers. Under the GDPR, the IT security mantra should be “always be monitoring.” Without graph capabilities, your analysts will continue to struggle to correlate the alerts they see across all of your security tools. You’ll need to spot unusual access patterns, lateral movement, relationships, data mining, and so much more. When you identify a breach, you are required to promptly report the exposure to the local data authority. Failure to do so can lead to enormous fines, particularly for multinationals with large global revenues.
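To show what correlating alerts across tools can look like, here is a minimal Python sketch that groups alerts into connected components whenever they share an entity such as a user or host. The alert data and the `correlate` helper are hypothetical, and this is a plain graph traversal rather than any particular product’s method.

```python
from collections import defaultdict

# Hypothetical alerts from different security tools.
alerts = [
    {"id": "edr-1", "tool": "EDR", "entities": {"host:ws-12", "user:eli"}},
    {"id": "dlp-7", "tool": "DLP", "entities": {"user:eli", "file:hr/payroll.xlsx"}},
    {"id": "fw-3", "tool": "firewall", "entities": {"host:ws-12", "ip:203.0.113.9"}},
    {"id": "av-9", "tool": "antivirus", "entities": {"host:ws-40"}},
]

def correlate(alerts):
    """Group alerts that are linked, directly or indirectly, by shared entities."""
    by_entity = defaultdict(list)
    for alert in alerts:
        for entity in alert["entities"]:
            by_entity[entity].append(alert["id"])
    entities_of = {alert["id"]: alert["entities"] for alert in alerts}

    seen, groups = set(), []
    for alert in alerts:
        if alert["id"] in seen:
            continue
        seen.add(alert["id"])
        stack, group = [alert["id"]], set()
        while stack:  # depth-first walk over alerts connected via entities
            current = stack.pop()
            group.add(current)
            for entity in entities_of[current]:
                for other in by_entity[entity]:
                    if other not in seen:
                        seen.add(other)
                        stack.append(other)
        groups.append(sorted(group))
    return groups

incidents = correlate(alerts)
print(incidents)
```

Three alerts from three different tools collapse into one candidate incident because they share a user and a host, while the unrelated antivirus alert stays on its own.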

Automation through Graph with TigerGraph is the key to successfully protecting your data.