Practical and Scalable Graph Embedding Algorithms

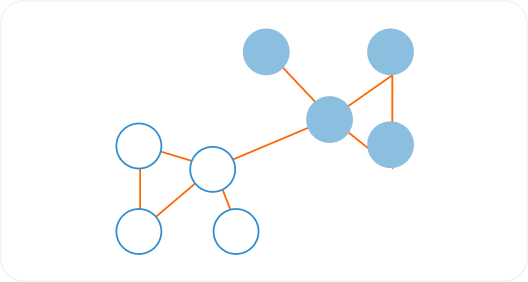

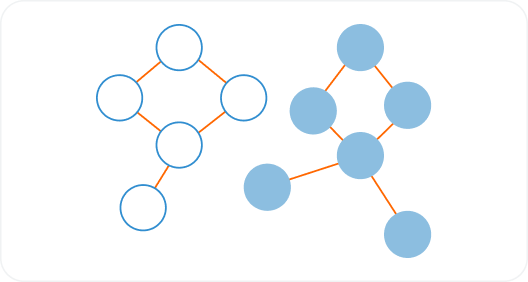

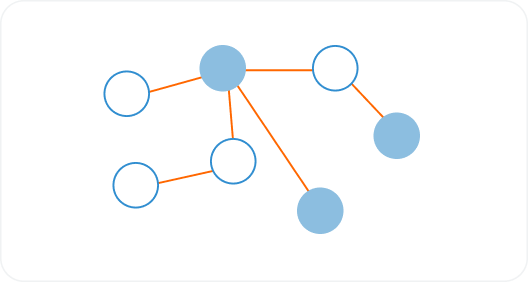

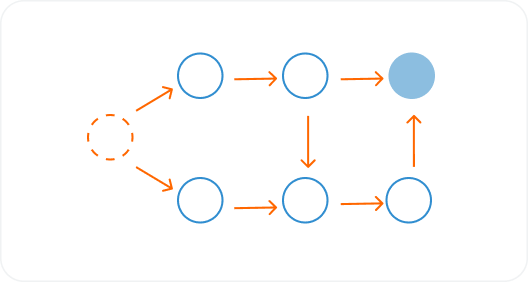

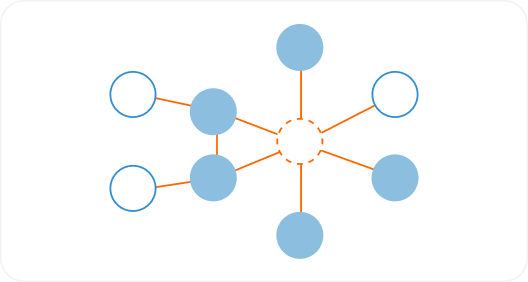

Improve similarity, classification, and link prediction tasks with embedding algorithms that transform each node's neighborhood in a graph into a compact vector.
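
The text does not name a specific embedding algorithm. The code below is one way to turn a node's neighborhood into a compact vector: a simplified sketch loosely modeled on Fast Random Projection (FastRP). Each node starts with a sparse random vector. That vector is then repeatedly averaged over the node's neighbors, and the per-hop results are summed with weights. The names `fastrp_style_embeddings` and `cosine_similarity`, the `weights` and `seed` parameters, and all defaults are hypothetical. This is not any library's API.

```python
import math
import random


def fastrp_style_embeddings(adjacency, dim=16, weights=(0.0, 1.0, 1.0), seed=7):
    """Hypothetical, simplified FastRP-style node embeddings (illustrative sketch).

    adjacency: dict mapping every node to a list of its neighbors.
    weights[0] scales the initial random vector; weights[k] scales the
    k-hop averaged vector, so len(weights) - 1 is the number of hops.
    """
    rng = random.Random(seed)
    s = 3  # very sparse random projection: +/-sqrt(3) w.p. 1/6 each, else 0

    def sparse_entry():
        r = rng.random()
        if r < 1 / (2 * s):
            return math.sqrt(s)
        if r < 1 / s:
            return -math.sqrt(s)
        return 0.0

    nodes = sorted(adjacency)
    current = {n: [sparse_entry() for _ in range(dim)] for n in nodes}
    embedding = {n: [weights[0] * x for x in current[n]] for n in nodes}

    for w in weights[1:]:
        nxt = {}
        for n in nodes:
            neighbors = adjacency[n]
            # Average the neighbors' current vectors (one hop of propagation).
            avg = [0.0] * dim
            for m in neighbors:
                avg = [a + x for a, x in zip(avg, current[m])]
            if neighbors:
                avg = [a / len(neighbors) for a in avg]
            # L2-normalize so each hop contributes on a comparable scale.
            norm = math.sqrt(sum(a * a for a in avg))
            nxt[n] = [a / norm for a in avg] if norm > 0 else avg
        # Add this hop's vectors to the running embedding, scaled by its weight.
        embedding = {n: [e + w * x for e, x in zip(embedding[n], nxt[n])] for n in nodes}
        current = nxt
    return embedding


def cosine_similarity(u, v):
    """Cosine similarity of two vectors; returns 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


# Toy graph: "a" and "b" have exactly the same neighbors.
graph = {
    "a": ["c", "d"],
    "b": ["c", "d"],
    "c": ["a", "b", "e"],
    "d": ["a", "b"],
    "e": ["c"],
}
emb = fastrp_style_embeddings(graph)
print(cosine_similarity(emb["a"], emb["b"]))
```

In this toy graph, "a" and "b" have identical neighborhoods, so they receive identical vectors. More generally, nodes with overlapping neighborhoods tend to land close together. That is why cosine similarity between embeddings can serve as a similarity or link-prediction score, and why the vectors can be passed as features to a classifier.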
