TigerGraph's Native Parallel Graph is a transformational technology with clear advantages over the best-known graph database solutions on the market. The current leading solution offers comprehensive, well-documented graph database functionality, but it is considerably slower. In benchmark tests, TigerGraph loaded a batch of data in one hour, while the other solution needed 24 hours.

Further, by offering parallelism for large-scale graph analytics, TigerGraph supports parallel graph algorithms for Very Large Graphs (VLGs), a technological advantage that grows as graphs inevitably grow larger. It handles fast, limited queries that touch anywhere from a small portion of the graph to millions of vertices and edges, as well as more complex analyses that must touch every vertex in the graph. Additionally, real-time incremental graph updates make it suitable for real-time graph analytics, unlike other solutions.

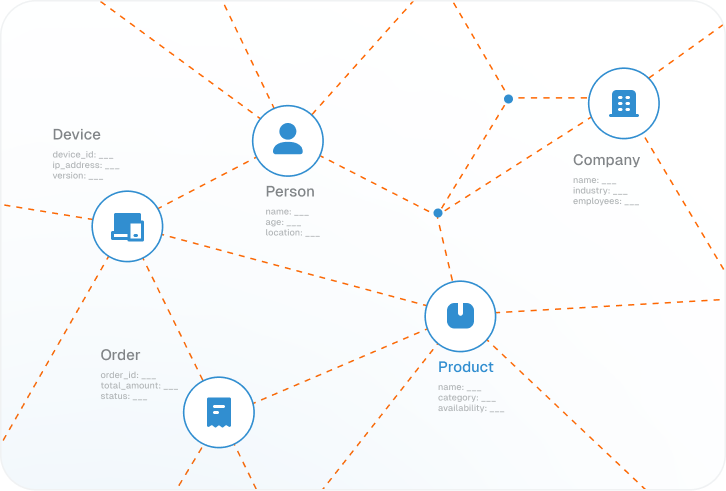

The TigerGraph advantage lies in representing graphs as a computational model. Compute functions can be associated with each vertex and edge in the graph, transforming them into active parallel compute-storage elements that behave much like neurons in the human brain.
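
To make the idea of vertices and edges as active compute elements more concrete, here is a minimal Python sketch of a vertex-centric model. It is not TigerGraph's engine or its query language; the names `vertex_compute`, `edge_compute`, and `run_supersteps` are hypothetical, and the choice of minimum-label propagation (which finds connected components) is only an illustrative assumption. Each vertex runs its own function on the values its edges deliver, and all vertices in a round can run in parallel because they read only the previous round's state.

```python
from concurrent.futures import ThreadPoolExecutor


def vertex_compute(label, incoming):
    """Hypothetical per-vertex function: keep the smallest label seen so far."""
    return min([label, *incoming])


def edge_compute(source_label):
    """Hypothetical per-edge function: forward the neighbor's label unchanged."""
    return source_label


def run_supersteps(edges, max_steps=100):
    """Propagate minimum labels until nothing changes (connected components)."""
    # Build an undirected adjacency list from (u, v) edge pairs.
    neighbors = {}
    for u, v in edges:
        neighbors.setdefault(u, set()).add(v)
        neighbors.setdefault(v, set()).add(u)

    labels = {v: v for v in neighbors}  # every vertex starts with its own id

    def step(vertex):
        # Reads only the previous round's labels, so vertices are independent
        # of each other within a round and can be evaluated in parallel.
        incoming = [edge_compute(labels[n]) for n in neighbors[vertex]]
        return vertex, vertex_compute(labels[vertex], incoming)

    with ThreadPoolExecutor() as pool:
        for _ in range(max_steps):
            new_labels = dict(pool.map(step, list(neighbors)))
            if new_labels == labels:  # converged: no vertex changed its label
                break
            labels = new_labels
    return labels
```

For example, `run_supersteps([(1, 2), (2, 3), (4, 5)])` assigns label 1 to vertices 1, 2, and 3 and label 4 to vertices 4 and 5. The thread pool here only illustrates that per-vertex work within a round is independent; a real parallel graph engine would distribute that work very differently.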