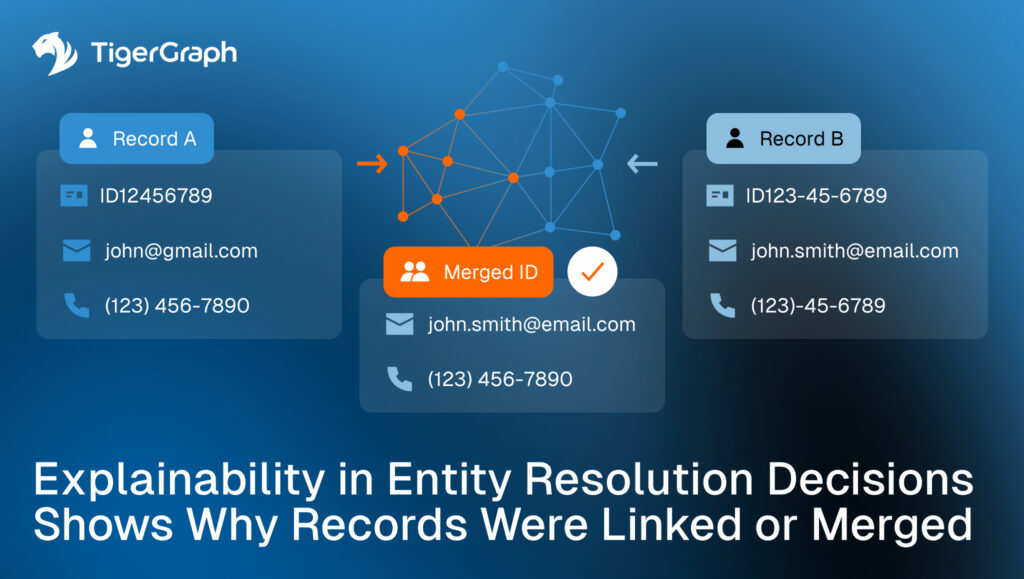

Explainability in Entity Resolution Decisions Shows Why Records Were Linked or Merged

Entity resolution decisions shape everything that follows. Alerts, investigations, risk scores, suppressions, and escalations all depend on whether records were linked, merged or kept separate. Yet in many programs, the reasoning behind those decisions is difficult to retrieve after the fact.

When reviewers, QA teams or auditors ask why two records were linked, the answer is often incomplete: a score, a rule trigger or the bare fact that a merge occurred. The supporting evidence is scattered or no longer visible. This is where explainability breaks down, where entity resolution becomes harder to defend at scale, and where graph-based analysis comes in.

Key takeaways

- Entity resolution decisions must be explainable long after they occur, not only at the moment of matching.

- Scores and rules alone are insufficient; decisions also need preserved relationships as evidence.

- Graph-based workflows support explainability by returning connection paths that show how and why records were linked or merged.

To see why explainability becomes such a persistent problem, it helps to look at how resolution decisions are actually used downstream.

Why Explainability Matters in Entity Resolution

Entity resolution answers a deceptively simple question: do these records represent the same real-world entity?

The operational impact of that answer is significant. Once records are linked or merged, downstream systems assume the decision is correct and durable. Reviews build on it, suppression logic depends on it, and prior outcomes are reused because the identity context appears settled.

Explainability matters because these decisions are reviewed later, often under pressure, and often by people who were not involved in the original match. Without clear evidence, teams are left to reconstruct history rather than evaluate risk.

The challenge is preserving explainability once decisions move into production.

Where Explainability Typically Breaks Down

Most explainability gaps aren’t due to missing data. They come from how resolution decisions are stored and reviewed.

Decisions reduced to scores or outcomes

Similarity scores and match thresholds are useful for prioritization, but they do not explain why records were linked. A numeric value does not show which attributes mattered, which relationships contributed or what evidence was decisive.
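The following minimal Python sketch shows a scorer that returns the attributes behind its number, not only the number itself. The attribute names, the weights and the `score_pair` helper are hypothetical. Exact-match comparison stands in for whatever similarity functions a real program uses.

```python
from dataclasses import dataclass

# Hypothetical attribute weights; a real program sets these by policy or training.
WEIGHTS = {"email": 0.5, "phone": 0.3, "address": 0.2}

@dataclass(frozen=True)
class ScoredMatch:
    score: float
    contributions: dict[str, float]  # attribute -> weight it added to the score

def score_pair(a: dict, b: dict) -> ScoredMatch:
    """Exact-match scoring that keeps per-attribute evidence next to the total."""
    contributions = {}
    for attr, weight in WEIGHTS.items():
        va, vb = a.get(attr), b.get(attr)
        # Count an attribute only when both records have it and the values agree.
        if va is not None and va == vb:
            contributions[attr] = weight
    # Round to avoid floating-point noise in the summed total.
    return ScoredMatch(score=round(sum(contributions.values()), 6),
                       contributions=contributions)

r1 = {"id": "A1", "email": "j.doe@example.com", "phone": "555-0100", "address": "1 Main St"}
r2 = {"id": "B7", "email": "j.doe@example.com", "phone": "555-0199", "address": "1 Main St"}
m = score_pair(r1, r2)
print(m.score)          # 0.7
print(m.contributions)  # {'email': 0.5, 'address': 0.2}
```

The total remains available for prioritization. The `contributions` field records which attributes actually produced it.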

Links without preserved context

Records may be linked based on shared devices, addresses or identifiers, but the connection itself is not retained as reviewable evidence. Over time, the rationale disappears even though the link remains.

Merges that cannot be unpacked

When multiple records are collapsed into a single profile, reviewers often cannot see the internal structure that justified the merge. Conflicting attributes coexist without explanation, and the original linkage logic is no longer visible.
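One way to keep a merge unpackable is to store each source value together with the record it came from, along with the links that justified the merge. The sketch below assumes a simple schema in which each record is a dict with an `id` field. `merge_with_provenance` and `conflicting_attributes` are illustrative helpers, not part of any product API.

```python
from collections import defaultdict

def merge_with_provenance(records, links):
    """Merge records into one profile without discarding how the merge happened.

    records: list of dicts, each with a unique "id" (hypothetical schema).
    links: list of (id_a, id_b, reason) tuples that justified the merge.
    """
    values = defaultdict(list)
    for rec in records:
        for attr, value in rec.items():
            if attr != "id":
                values[attr].append((value, rec["id"]))  # keep the source record
    return {
        "members": [rec["id"] for rec in records],
        "links": list(links),
        "values": dict(values),
    }

def conflicting_attributes(profile):
    """Attributes on which member records disagree, with each value's source."""
    return {
        attr: sources
        for attr, sources in profile["values"].items()
        if len({value for value, _ in sources}) > 1
    }

records = [
    {"id": "A1", "name": "Jane Doe", "phone": "555-0100"},
    {"id": "B7", "name": "Jane Doe", "phone": "555-0199"},
]
profile = merge_with_provenance(records, [("A1", "B7", "shared email j.doe@example.com")])
print(conflicting_attributes(profile))
# {'phone': [('555-0100', 'A1'), ('555-0199', 'B7')]}
```

The member IDs and link reasons survive the merge. A reviewer can therefore see why the records were combined and which record each of the disagreeing phone numbers came from.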

Inconsistent explanations across workflows

A record may be linked in one system and unresolved in another. Without a shared, explainable foundation, teams struggle to reconcile differences or explain why outcomes diverged.

These gaps do not imply bad matching logic. They indicate that resolution decisions are not being treated as first-class, reviewable artifacts.

These breakdowns point to a gap between how resolution decisions are made and how they are stored for review.

What Explainability Requires in Practice

Explainable entity resolution is about preserving evidence in a way that supports review. At a minimum, teams need to answer three questions consistently:

- Which records were linked or merged?

- What evidence justified that decision at the time?

- How do those records relate structurally, not just statistically?
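These three questions correspond to fields in a decision record written at resolution time. The sketch below is minimal. The names `Edge`, `ResolutionDecision` and the `er-rules-v3` policy label are assumptions made for illustration. The point is that the record stores evidence as explicit relationships, not just an outcome.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass(frozen=True)
class Edge:
    """One relationship used as evidence, e.g. record:A1 -HAS_EMAIL-> email:..."""
    source: str
    relation: str
    target: str

@dataclass(frozen=True)
class ResolutionDecision:
    outcome: str                 # "linked", "merged" or "kept_separate"
    record_ids: tuple[str, ...]  # which records were linked or merged
    evidence: tuple[Edge, ...]   # relationships that justified the decision
    policy_version: str          # rule set in force when the decision was made
    decided_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def explain(self) -> str:
        """Render the decision and its supporting relationships for a reviewer."""
        header = f"{self.outcome}: {', '.join(self.record_ids)} (policy {self.policy_version})"
        lines = [f"  {e.source} -[{e.relation}]-> {e.target}" for e in self.evidence]
        return "\n".join([header, *lines])

decision = ResolutionDecision(
    outcome="linked",
    record_ids=("A1", "B7"),
    evidence=(
        Edge("record:A1", "HAS_EMAIL", "email:j.doe@example.com"),
        Edge("record:B7", "HAS_EMAIL", "email:j.doe@example.com"),
    ),
    policy_version="er-rules-v3",
)
print(decision.explain())
```

Because the evidence edges point at a shared `email:` node, the record shows the structural connection between A1 and B7. A similarity value alone would not show it.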

Meeting these requirements becomes harder as resolution complexity increases and as decisions are reused across time and systems. Teams require a way to consistently retain and revisit the structure behind each decision. They need connected analysis.

What Connected Analysis Adds

Connected analysis improves explainability by shifting the focus from outcomes to structure.

Instead of relying only on similarity scores or rule results, teams can examine the relationships that connect records. These relationships become the explanation.

Connection paths as evidence

A connection path shows the sequence of relationships that link one record to another. This might include shared devices, shared contact information, ownership links or transactional relationships. Preserving that path allows reviewers to see how records were connected, not just that they were.
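A connection path can be found with an ordinary breadth-first search over a graph in which records connect to the attribute values they hold. This Python sketch uses in-memory dicts and hypothetical record data. A graph database would typically express the same traversal as a query over stored vertices and edges.

```python
from collections import deque

def build_graph(records):
    """Undirected graph linking each record to the attribute values it holds."""
    graph = {}
    for rec in records:
        rec_node = f"record:{rec['id']}"
        for attr, value in rec.items():
            if attr == "id":
                continue
            val_node = f"{attr}:{value}"  # prefix avoids collisions across attributes
            graph.setdefault(rec_node, []).append(val_node)
            graph.setdefault(val_node, []).append(rec_node)
    return graph

def connection_path(graph, start, goal):
    """Shortest path from start to goal via breadth-first search, or None."""
    previous = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:  # walk back to the start
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbor in sorted(graph.get(node, [])):  # sorted => deterministic paths
            if neighbor not in previous:
                previous[neighbor] = node
                queue.append(neighbor)
    return None

records = [
    {"id": "A1", "device": "D9", "email": "j.doe@example.com"},
    {"id": "B7", "device": "D9", "phone": "555-0100"},
    {"id": "C3", "phone": "555-0100", "address": "1 Main St"},
]
graph = build_graph(records)
print(" -> ".join(connection_path(graph, "record:A1", "record:C3")))
# record:A1 -> device:D9 -> record:B7 -> phone:555-0100 -> record:C3
```

A1 and C3 share no attribute directly. The path shows they are connected through B7, which shares a device with A1 and a phone number with C3. Sorting neighbors keeps the returned path stable when several shortest paths exist.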

Structure instead of abstraction

Graph queries return concrete relationships rather than abstract confidence levels. Reviewers can inspect which elements contributed to linkage and whether those elements remain valid.

Reproducible decisions

When the same query logic returns the same paths, decisions become repeatable. QA teams can reproduce results, auditors can follow the evidence and disagreements become resolvable. This supports explainability without turning resolution into a manual process.
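Reproducibility can be checked mechanically by fingerprinting the evidence. The sketch below uses a hypothetical `evidence_fingerprint` helper and example paths. It hashes a canonical serialization of the paths, so a QA rerun that returns the same evidence in a different order still produces the same digest.

```python
import hashlib
import json

def evidence_fingerprint(paths):
    """Stable SHA-256 digest of a set of connection paths.

    Paths are sorted and serialized canonically, so identical evidence always
    yields the same digest regardless of the order a query returned it in.
    """
    canonical = json.dumps(sorted(paths), separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

# Paths recorded when the link was made (hypothetical example data).
recorded = [
    ["record:A1", "device:D9", "record:B7"],
    ["record:A1", "email:j.doe@example.com", "record:B7"],
]
# Paths returned by re-running the same query logic during QA review.
rerun = [
    ["record:A1", "email:j.doe@example.com", "record:B7"],
    ["record:A1", "device:D9", "record:B7"],
]
print(evidence_fingerprint(recorded) == evidence_fingerprint(rerun))  # True
```

A later rerun may stop returning one of the recorded paths, for example because a device link was removed. In that case the digests differ, and the decision can be flagged for review.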

When decision structure is visible, explainability stops being theoretical and starts showing up in daily workflows.

How TigerGraph Fits the Workflow

The operational challenge is preserving explanations as part of the resolution process. TigerGraph supports this by storing entities and relationships directly and returning connection paths as part of query results. This allows teams to:

- Retrieve the relationships that justified a link or merge

- Show how records are connected through shared context

- Apply consistent logic across investigations, QA and audit review

The system does not decide whether records should be linked or merged. Those decisions remain governed by program policy. TigerGraph provides the connected evidence needed to explain and defend them.

When explainability is treated as a quality requirement, resolution outcomes become easier to trust and reuse.

Entity resolution decisions fail when they cannot be explained. Explainability turns entity resolution from a black box into a defensible process. It allows teams to trust past decisions without freezing them in place.

When resolution decisions are explainable, they remain usable. When they are not, they quietly become liabilities.

If explainability is becoming a governance requirement in your program, contact TigerGraph to see how connected, path-level evidence can make entity resolution decisions reviewable, defensible and repeatable at scale.

Frequently Asked Questions

1. What Does Explainability Mean in Entity Resolution and Why Is It Important?

Explainability in entity resolution means providing clear, reviewable evidence showing why records were linked or merged, ensuring decisions can be validated and trusted over time.

2. Why Are Scores and Match Rules Insufficient for Explaining Identity Decisions?

Scores and rules are insufficient because they summarize outcomes without showing the underlying relationships or evidence that justified the decision.

3. How Can Organizations Provide Auditable Evidence for Entity Resolution Decisions?

Organizations can provide auditable evidence by preserving relationship paths and connection data that show how records are structurally linked.

4. What Challenges Do Teams Face When Trying to Reconstruct Past Resolution Decisions?

Teams struggle because context is often lost, making it difficult to trace which attributes or relationships originally justified a link or merge.

5. How Does Relationship-Based Analysis Improve Trust in Entity Resolution Outcomes?

Relationship-based analysis improves trust by making decisions transparent, reproducible, and grounded in visible connections rather than abstract scores.