How Small Relationship Errors Create Big Entity Resolution Failures

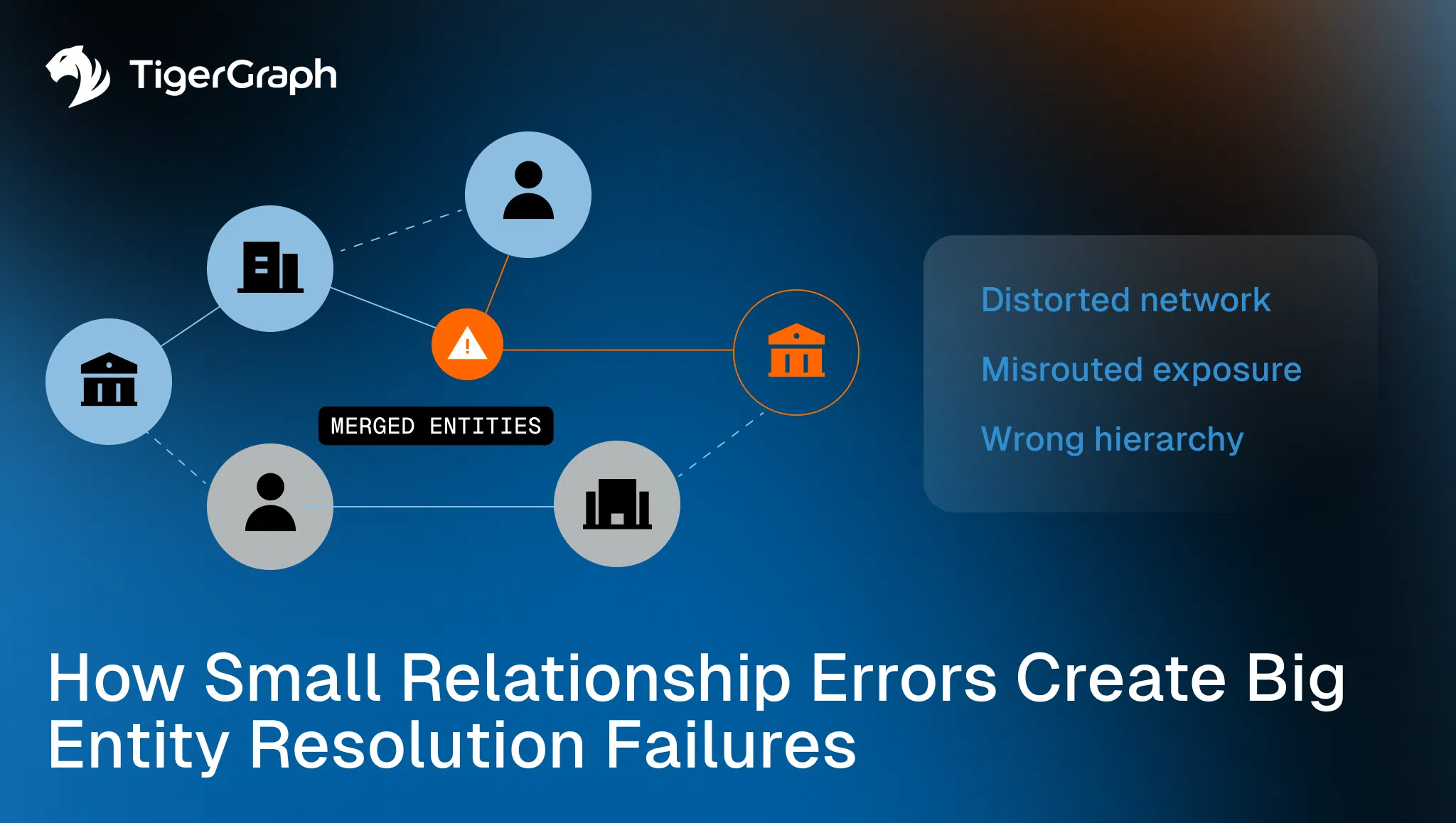

Entity resolution is often discussed as a record-matching problem, with a focus on which records belong together and which do not. But many resolution failures have less to do with the records themselves and more to do with the relationships that connect them.

In graph-based systems, those relationships are often called edges. An edge is simply a recorded connection between two things, such as an account “owned by” a business, a device “used by” a customer, or a transaction linking two entities. These connections define how records relate and how context is assembled.

When even one of those connections is incorrect, incomplete or outdated, it can quietly distort an otherwise reasonable entity view. Over time, small relationship errors compound. Networks become harder to interpret, risk exposure becomes harder to explain, and investigations slow down even when data volume has not materially changed.

This is the cost of bad edges.

Key takeaways

- Incorrect or weak relationships can pollute otherwise accurate entity resolution.

- Missing, duplicated, reversed or inconsistent links undermine confidence in resolved entities.

- Link validation is a structural quality problem, not a matching problem.

- Graph-based workflows make it possible to detect, review and correct bad edges before they propagate downstream.

Why Links Matter as Much as Records in Entity Resolution

Entity resolution relies on two things: records and the relationships that connect them. Records describe attributes. Relationships describe context.

Ownership links, device links, account links, transactional links and control relationships all shape how entities are interpreted. They influence exposure calculations, investigation scope, escalation decisions and downstream analytics.

When those relationships are incorrect, incomplete or inconsistent, the resolved entity may look complete while still being structurally unsound.

This is why link quality is not a secondary concern. It directly affects how identity truth is constructed and reused.

How Bad Edges Enter Resolution Workflows

Link failures usually enter the system through normal processes.

Some links are created automatically based on shared attributes. Others come from upstream systems with different modeling assumptions. Still others are added during remediation or investigation and never revisited.

Over time, several recurring failure modes appear.

Missing links

Expected relationships are absent. Ownership chains break, accounts appear orphaned or networks terminate early, even though additional context exists elsewhere.
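As an illustration, one common missing-link check looks for accounts that have no ownership edge at all. The sketch below is a minimal Python example, not a TigerGraph API: it assumes a hypothetical edge model of `(source_id, relationship_type, target_id)` tuples and a hypothetical `OWNED_BY` relationship type.

```python
from typing import Iterable, Set, Tuple

# Hypothetical edge model: (source_id, relationship_type, target_id).
Edge = Tuple[str, str, str]


def find_orphaned_accounts(accounts: Iterable[str], edges: Iterable[Edge]) -> Set[str]:
    """Return accounts that have no outgoing OWNED_BY edge (hypothetical type)."""
    owned = {src for src, rel, _ in edges if rel == "OWNED_BY"}
    return set(accounts) - owned


accounts = ["acct-1", "acct-2", "acct-3"]
edges = [
    ("acct-1", "OWNED_BY", "biz-A"),
    ("acct-3", "OWNED_BY", "cust-7"),
    ("acct-2", "USED_BY", "device-9"),  # a link exists, but not an ownership link
]
print(find_orphaned_accounts(accounts, edges))  # {'acct-2'}
```

An orphan is a review signal rather than proof of an error; some account types may legitimately lack an owner edge.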

Duplicated links

The same relationship appears multiple times with slight variations. Connectivity is inflated, and network measures and scoring logic become distorted.
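Duplicates that differ only in formatting can be surfaced by normalizing each edge before grouping. A minimal sketch, assuming the same hypothetical tuple model and assuming that letter case, surrounding whitespace and spaces versus underscores are the only variations worth collapsing (it also assumes entity IDs are case-insensitive). Real programs would define their own normalization rules.

```python
from collections import defaultdict


def normalize(edge):
    """Canonical form: trimmed lowercase IDs, uppercase relationship type with underscores."""
    src, rel, dst = edge
    return (
        src.strip().lower(),
        rel.strip().upper().replace(" ", "_"),
        dst.strip().lower(),
    )


def find_duplicate_edges(edges):
    """Group edges by canonical form and keep only groups with more than one variant."""
    groups = defaultdict(list)
    for edge in edges:
        groups[normalize(edge)].append(edge)
    return {key: variants for key, variants in groups.items() if len(variants) > 1}


edges = [
    ("acct-1", "OWNED_BY", "biz-A"),
    ("ACCT-1 ", "owned by", "biz-a"),  # same relationship, different formatting
    ("acct-2", "OWNED_BY", "biz-B"),
]
for canonical, variants in find_duplicate_edges(edges).items():
    print(canonical, "->", len(variants), "variants")
```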

Reversed or inconsistent directionality

Relationships that imply control, ownership or flow appear reversed across sources. This breaks hierarchy, exposure logic and review assumptions.
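Direction conflicts across sources can be found by recording which source system asserts each directed edge and then checking whether the reverse edge is also asserted. A minimal sketch, assuming edges carry a hypothetical source-system label and that `OWNS` and `CONTROLS` are the (hypothetical) relationship types where direction matters.

```python
from collections import defaultdict

# Hypothetical: relationship types whose direction carries meaning.
DIRECTED_TYPES = {"OWNS", "CONTROLS"}


def find_direction_conflicts(edges):
    """edges: (src, rel, dst, source_system) tuples.

    Returns (rel, a, b, systems asserting a->b, systems asserting b->a)
    for every pair where some source asserts each direction.
    """
    asserted = defaultdict(set)  # (src, rel, dst) -> source systems asserting it
    for src, rel, dst, system in edges:
        if rel in DIRECTED_TYPES:
            asserted[(src, rel, dst)].add(system)

    conflicts = []
    for (src, rel, dst), systems in asserted.items():
        reverse = asserted.get((dst, rel, src))  # .get() does not insert new keys
        if reverse and src < dst:  # report each unordered pair once; skips self-loops
            conflicts.append((rel, src, dst, sorted(systems), sorted(reverse)))
    return conflicts


edges = [
    ("biz-A", "OWNS", "acct-1", "crm"),
    ("acct-1", "OWNS", "biz-A", "core_banking"),  # reversed in another source
    ("biz-B", "OWNS", "acct-2", "crm"),
]
print(find_direction_conflicts(edges))
# [('OWNS', 'acct-1', 'biz-A', ['core_banking'], ['crm'])]
```

Some mutual relationships, such as cross-holdings, can be legitimate, so a check like this only raises pairs for review.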

Loops and unresolved cycles

Circular relationships exist but are not recognized or validated. These loops may be legitimate structures or artifacts of poor linkage. Without validation, teams cannot tell which.
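Cycles can be surfaced with a depth-first search that tracks the current path and reports a loop whenever it reaches a node already on that path. A minimal sketch over hypothetical directed `(owner, owned)` edges. It reports the cycles the search encounters, which is enough to flag loops for review, but it does not enumerate every elementary cycle (an algorithm such as Johnson's would be needed for that). Whether a reported loop is legitimate remains a program decision.

```python
from collections import defaultdict


def find_cycles(edges):
    """Return cycles met during DFS over directed (src, dst) edges.

    Each cycle is a list of nodes with the first node repeated at the end.
    Recursive for brevity; very deep graphs would need an iterative version.
    """
    graph = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)

    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on current path, finished
    color = defaultdict(int)      # defaults to WHITE
    stack, cycles = [], []

    def dfs(node):
        color[node] = GRAY
        stack.append(node)
        for nxt in graph[node]:
            if color[nxt] == GRAY:  # back edge: nxt is on the current path
                start = stack.index(nxt)
                cycles.append(stack[start:] + [nxt])
            elif color[nxt] == WHITE:
                dfs(nxt)
        stack.pop()
        color[node] = BLACK

    for node in list(graph):  # snapshot, since dfs may add keys to graph
        if color[node] == WHITE:
            dfs(node)
    return cycles


edges = [
    ("biz-A", "biz-B"),
    ("biz-B", "biz-C"),
    ("biz-C", "biz-A"),  # closes an ownership loop
    ("biz-C", "acct-1"),
]
print(find_cycles(edges))  # [['biz-A', 'biz-B', 'biz-C', 'biz-A']]
```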

Low-quality or weakly supported edges

Links exist but are not supported by surrounding evidence. They persist because nothing explicitly invalidated them, not because anything confirmed they are still correct.
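One way to make weak support visible is to attach evidence and a last-confirmed date to each link and flag links that fall below program-defined thresholds. A minimal sketch; the `Link` fields, the two-observation minimum and the 365-day window are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class Link:
    src: str
    rel: str
    dst: str
    evidence: List[str] = field(default_factory=list)  # hypothetical IDs of supporting observations
    last_confirmed: Optional[date] = None              # when evidence last supported the link


def flag_weak_links(links, min_evidence=2, max_age_days=365, today=None):
    """Return (link, reasons) for links with thin or stale support."""
    today = today or date.today()
    flagged = []
    for link in links:
        reasons = []
        if len(link.evidence) < min_evidence:
            reasons.append("insufficient evidence")
        if link.last_confirmed is None or (today - link.last_confirmed).days > max_age_days:
            reasons.append("stale or never confirmed")
        if reasons:
            flagged.append((link, reasons))
    return flagged


links = [
    Link("dev-9", "USED_BY", "cust-7", ["login-1", "login-2"], date(2024, 5, 1)),
    Link("acct-2", "OWNED_BY", "biz-B", ["import-2019"], date(2019, 3, 1)),
]
for link, reasons in flag_weak_links(links, today=date(2024, 6, 1)):
    print(link.src, link.rel, link.dst, reasons)
# acct-2 OWNED_BY biz-B ['insufficient evidence', 'stale or never confirmed']
```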

None of these issues requires bad data. They emerge from inconsistent linkage logic over time.

Why Bad Edges Quietly Pollute Resolution

Once a relationship exists, it tends to persist. Detection systems, investigations and analytics treat edges as truth unless explicitly challenged. As a result, bad edges influence more than just resolution.

They affect exposure calculations, which entities are reviewed together, how far investigations expand and which alerts fire or are suppressed.

Because the impact is distributed, no single workflow clearly owns the failure. The result is gradual degradation rather than a visible break.

This is why link validation is often overlooked until outcomes become difficult to explain.

What Link Validation Actually Means in Practice

Link validation is not about constantly rechecking every relationship. It is about ensuring that relationships behave coherently within their surrounding context.

In practice, this means asking a small set of structural questions.

- Does this relationship align with nearby evidence?

- Does it behave consistently across systems and workflows?

- Does it strengthen or weaken when new evidence arrives?

- Does it create connectivity that makes sense for the entity’s role and lifecycle?

These are structural questions, not matching questions.

Answering them requires visibility into how relationships interact, not just whether two records share attributes. This is where connected analysis is valuable.

What Connected Analysis Adds

Connected analysis makes link quality observable. Instead of evaluating edges in isolation, teams can evaluate how each relationship behaves inside the network it connects.

This supports several operational capabilities.

Detection of missing and broken paths

Graph-based analysis relies on traversal rather than table joins. Traversal means following relationships step by step across connected entities to understand how records are actually linked.

By walking these paths, teams can see where expected connections stop early, diverge or fail to appear at all. These breaks often indicate missing links, incomplete data ingestion or relationships that were never created despite supporting evidence elsewhere in the network.
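A traversal of this kind can be sketched as a depth-first walk up ownership edges that records every terminal path and whether it ends where the program expects. A minimal sketch, assuming a hypothetical adjacency map from each entity to its owners, a hypothetical entity-type lookup and an illustrative rule that ownership chains should terminate at a person.

```python
def trace_ownership_paths(start, owned_by, entity_type, max_hops=10):
    """Walk owner edges upward from `start` and classify every terminal path.

    owned_by:    dict mapping an entity to the entities that own it (hypothetical model)
    entity_type: dict mapping an entity to its type, e.g. "person" or "business"
    """
    results = []

    def walk(path):
        node = path[-1]
        # Skip owners already on the path so loops cannot recurse forever.
        owners = [o for o in owned_by.get(node, []) if o not in path]
        if not owners or len(path) > max_hops:
            reached = entity_type.get(node) == "person"
            results.append((path, "reaches person" if reached else "stops early"))
            return
        for owner in owners:
            walk(path + [owner])

    walk([start])
    return results


owned_by = {"acct-1": ["biz-A"], "biz-A": ["holdco-X", "person-P"]}
entity_type = {
    "acct-1": "account",
    "biz-A": "business",
    "holdco-X": "business",
    "person-P": "person",
}
for path, status in trace_ownership_paths("acct-1", owned_by, entity_type):
    print(" -> ".join(path), ":", status)
# acct-1 -> biz-A -> holdco-X : stops early
# acct-1 -> biz-A -> person-P : reaches person
```

The `holdco-X` branch is the kind of break the text describes: either an owner link was never created or the holding company's data was never ingested.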

This shifts link validation from spot-checking individual relationships to verifying whether the network behaves the way it should.

Identification of duplicate and inconsistent relationships

Graph search can surface repeated edges, conflicting directions or overlapping structures that inflate connectivity.

Validation through neighborhood consistency

Edges can be evaluated based on how well they align with surrounding relationships. Weak or anomalous links stand out when viewed in context.
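Neighborhood consistency can be approximated with a simple overlap score: for each edge, compare the other neighbors of its two endpoints. A minimal sketch using Jaccard similarity on an undirected view of hypothetical entity links. Jaccard is one illustrative choice among many link-support measures, and a low score is a prompt for review, not a verdict.

```python
from collections import defaultdict


def neighborhood_support(edges):
    """Score each (a, b) edge by the Jaccard overlap of a's and b's other neighbors."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)

    scores = {}
    for a, b in edges:
        na = adj[a] - {b}  # exclude the edge's own endpoint
        nb = adj[b] - {a}
        union = na | nb
        scores[(a, b)] = len(na & nb) / len(union) if union else 0.0
    return scores


edges = [
    ("cust-7", "dev-9"),
    ("cust-7", "acct-1"),
    ("dev-9", "acct-1"),
    ("cust-7", "email-3"),
    ("dev-9", "email-3"),
    ("dev-9", "cust-99"),  # shares no context with the rest of the neighborhood
]
scores = neighborhood_support(edges)
weakest = min(scores, key=scores.get)
print(weakest, scores[weakest])  # ('dev-9', 'cust-99') 0.0
```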

Reviewable evidence for correction

When a link is questioned, the surrounding path provides the evidence needed to explain why it should be corrected, removed or retained.
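One way to produce that evidence is to look for a corroborating path: a short route between the two endpoints that does not use the questioned edge itself. A minimal sketch using breadth-first search over hypothetical undirected links; the four-hop limit is an illustrative assumption. A returned path can be attached to the review record, and `None` means the link stands alone.

```python
from collections import defaultdict, deque


def corroborating_path(edges, a, b, max_hops=4):
    """Shortest path from a to b that avoids the direct a-b edge, or None."""
    adj = defaultdict(set)
    for x, y in edges:
        if {x, y} != {a, b}:  # drop the questioned edge itself
            adj[x].add(y)
            adj[y].add(x)

    queue = deque([[a]])
    visited = {a}
    while queue:
        path = queue.popleft()
        if path[-1] == b:
            return path
        if len(path) - 1 >= max_hops:
            continue
        for nxt in sorted(adj[path[-1]]):  # sorted for deterministic output
            if nxt not in visited:
                visited.add(nxt)
                queue.append(path + [nxt])
    return None


edges = [
    ("cust-7", "acct-1"),
    ("cust-7", "dev-9"),
    ("dev-9", "acct-1"),
    ("acct-2", "cust-7"),
]
print(corroborating_path(edges, "cust-7", "acct-1"))  # ['cust-7', 'dev-9', 'acct-1']
print(corroborating_path(edges, "acct-2", "cust-7"))  # None
```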

The result is reviewable analysis in place of guesswork.

How This Prevents Downstream Resolution Failures

Validated links support stable entity resolution. When relationships are reliable, entities are easier to interpret, investigation scope becomes more predictable and exposure logic behaves consistently across cases.

More importantly, link validation prevents small errors from compounding.

Instead of allowing bad edges to quietly influence scoring, alerting and escalation, teams can identify and correct them early, before they distort broader workflows.

This reduces repeated remediation, inconsistent outcomes, and investigation dead ends tied to broken or polluted networks.

How TigerGraph Fits the Workflow

The operational challenge is validating relationships at scale while preserving reviewability.

TigerGraph supports this by enabling:

- Direct storage of relationships as first-class data

- Multi-hop traversal to evaluate how links behave in context

- Graph search to identify duplicates, loops and inconsistencies

- Query outputs that preserve paths for QA and audit review

The system does not decide which links are correct. It provides the structural visibility teams need to apply program-defined rules consistently and defensibly.

Practical Steps to Improve Link Validation

Programs seeking to reduce resolution failures tied to bad edges typically start with a few actions.

- Define which relationship types require validation and review

- Monitor for missing, duplicated, or inconsistent links as quality signals

- Require path-based evidence when links are added, corrected or removed

- Prioritize remediation where link issues affect exposure, escalation or reuse
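The path-evidence requirement can be enforced as a simple check on every proposed link change. A minimal sketch; the `LinkChange` record, its fields and the three rules below are hypothetical and would be replaced by a program's own governance rules.

```python
from dataclasses import dataclass
from typing import List, Tuple

ALLOWED_ACTIONS = {"add", "correct", "remove"}


@dataclass(frozen=True)
class LinkChange:
    action: str                     # "add", "correct" or "remove"
    edge: Tuple[str, str, str]      # (source_id, relationship_type, target_id)
    evidence_path: Tuple[str, ...]  # entity IDs along the supporting path
    reviewer: str


def validate_change(change: LinkChange) -> List[str]:
    """Return a list of problems; an empty list means the change can be recorded."""
    problems = []
    if change.action not in ALLOWED_ACTIONS:
        problems.append(f"unknown action: {change.action}")
    src, _, dst = change.edge
    if len(change.evidence_path) < 2:
        problems.append("missing path-based evidence")
    elif src not in change.evidence_path or dst not in change.evidence_path:
        problems.append("evidence path does not touch both endpoints")
    if not change.reviewer:
        problems.append("no reviewer recorded")
    return problems


ok = LinkChange("add", ("cust-7", "OWNS", "acct-1"), ("cust-7", "dev-9", "acct-1"), "analyst-12")
bad = LinkChange("remove", ("acct-2", "OWNED_BY", "biz-B"), (), "")
print(validate_change(ok))   # []
print(validate_change(bad))  # ['missing path-based evidence', 'no reviewer recorded']
```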

Link validation works best when treated as an ongoing quality discipline, not a one-time cleanup effort.

Conclusion

Bad edges pollute resolution quietly. They distort networks, suppress signals and undermine confidence in outcomes without triggering obvious errors. By making relationships reviewable, comparable and structurally visible, teams can prevent small linkage issues from becoming systemic failures. Clean edges do not guarantee perfect resolution, but without them, resolution cannot remain trustworthy.

Contact TigerGraph to see how graph traversal and path-based evidence help teams validate relationships, identify bad edges and maintain accurate, reviewable entity resolution.

Frequently Asked Questions

1. What Role Do Relationships Play in Entity Resolution for Fraud and AML Detection?

Relationships connect records such as customers, accounts, devices, and transactions, providing the context needed to interpret identity networks. In fraud and AML detection, entity resolution relies on these connections to understand how entities interact. If relationships are missing, duplicated, or incorrect, the resolved identity network may appear complete but still produce misleading conclusions about risk exposure or ownership structures.

2. How Do Incorrect Relationships Cause Failures in Entity Resolution Systems?

Incorrect relationships—often called “bad edges” in graph systems—can distort the structure of identity networks. A single inaccurate connection between entities can propagate through the network, causing investigators and detection models to treat unrelated entities as connected or overlook important relationships. Over time, these small errors compound and lead to inconsistent risk scoring, investigation confusion, and unreliable entity profiles.

3. Why Are Relationship Errors Difficult to Detect in Fraud and AML Data Environments?

Relationship errors are difficult to detect because they rarely trigger obvious system failures. Instead, they gradually distort how networks behave. Investigators may encounter unexplained investigation paths, inconsistent exposure calculations, or alerts that expand unexpectedly. These symptoms often originate from hidden relationship errors that only become visible when the surrounding network structure is examined.

4. How Can Graph Analytics Help Identify Relationship Errors in Financial Crime Investigations?

Graph analytics helps identify relationship errors by evaluating how connections behave within the broader network of entities. Investigators and data teams can traverse relationships across accounts, devices, businesses, and transactions to identify missing links, duplicated relationships, reversed connections, or suspicious cycles. This network-level visibility allows teams to detect structural inconsistencies that traditional record-based analysis often misses.

5. Why Is Relationship Validation Important for KYC, Fraud, and AML Programs?

Relationship validation ensures that identity networks accurately reflect how entities interact in the real world. In KYC, fraud, and AML programs, decisions about exposure, ownership, and suspicious activity depend heavily on these connections. Validating relationships helps prevent inaccurate risk assessments, improves investigation clarity, and ensures that identity resolution results remain trustworthy and defensible during regulatory reviews.