Should Graphs Power AI Before or After the LLM?

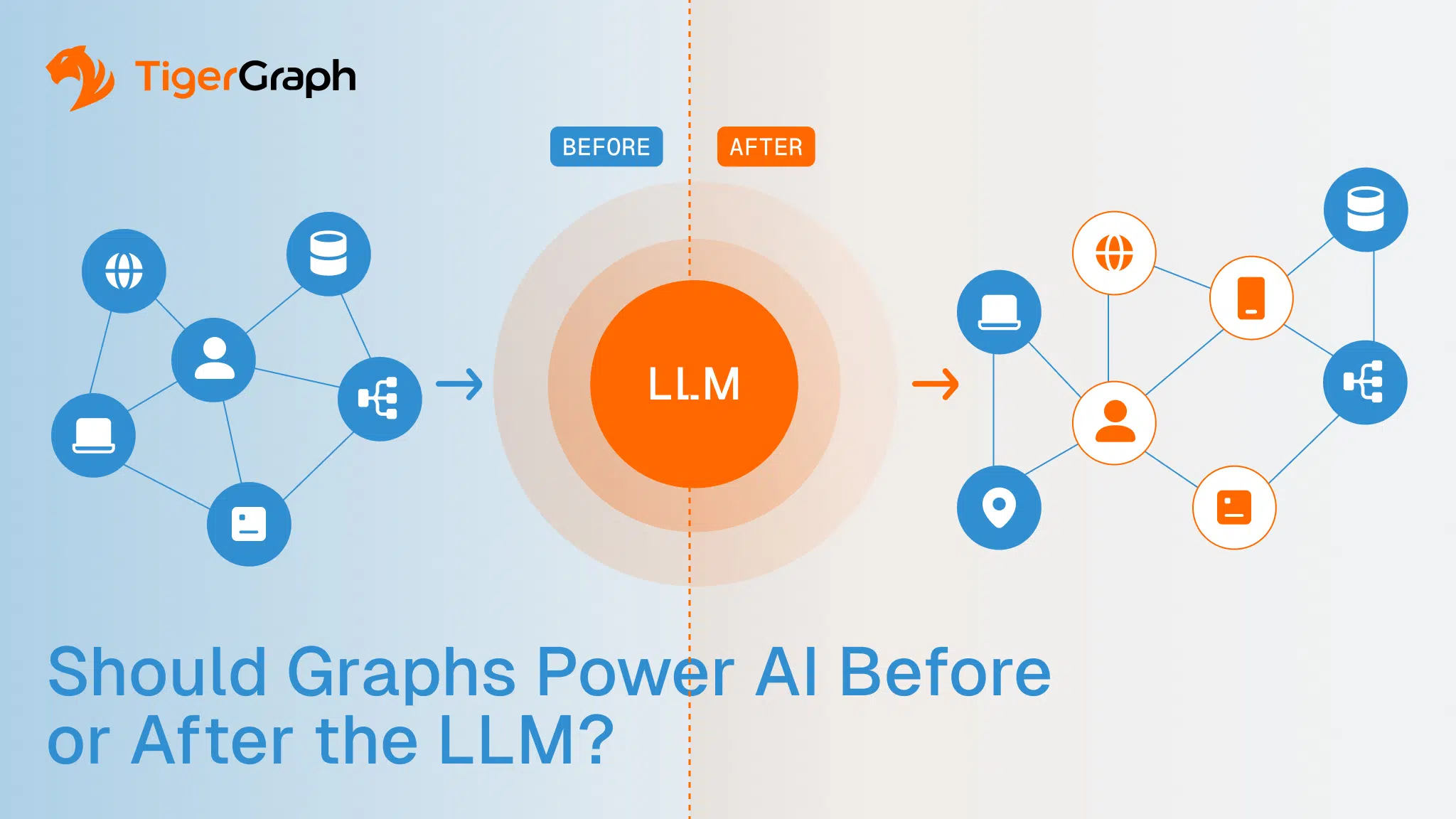

Enterprises designing modern AI systems eventually reach the same architectural crossroads. They see that a graph can strengthen their AI, but where should it sit? Should the graph be used in the retrieval phase, shaping the context the model receives before the LLM generates a response, or in the validation phase, verifying and refining the response afterward?

This decision determines several things, including:

- How will the system retrieve information?

- What can the model safely rely on?

- How confidently can an organization use AI inside high-stakes environments?

The underlying issue is simple. LLMs are powerful language generators, but they still guess. They need structure around them to stay aligned with reality. The graph is that structure.

In practice, the most stable architectures use the graph twice.

- A pre-LLM graph layer gives the model a grounded understanding of the domain so retrieval provides the right entities, relationships and constraints.

- A post-LLM graph layer checks the model’s output against authoritative data before it is used anywhere else.

One step guides the model; the other protects the organization.

Ideally, enterprises combine GraphRAG, graph and LLM workflows, hybrid search, and structured context. The result is an AI system that behaves consistently, explains its logic, and can reason across complex, multi-step relationships.

This just scratches the surface of the overall concept. The details that follow break down precisely how each stage works and why both matter.

How Graphs Strengthen AI Before the LLM

Using the graph before the model changes how the entire retrieval process works.

Graph-powered retrieval ensures that the LLM starts with entity-level grounding, multi-hop context and verified relationships. This is central to GraphRAG, where the graph assembles connected facts that guide the LLM.

Structured Context Improves Retrieval Quality

Traditional RAG pipelines retrieve information by comparing vector proximity. A vector is a numerical representation of text or other complex content. When a model converts a sentence or document into a vector, it captures the meaning of that text as a position in high-dimensional space. Two pieces of text that mean similar things end up close to one another in that space. This allows the system to find passages that are semantically related, even if they do not share exact keywords.
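
To make vector proximity concrete, here is a minimal sketch in Python. The three-dimensional vectors are made-up values for illustration (real embedding models produce far more dimensions), and cosine similarity is assumed as the proximity measure, which is one common choice among several.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings, not the output of any real model.
query = [0.9, 0.1, 0.0]
passages = {
    "refund policy": [0.8, 0.2, 0.1],
    "shipping delays": [0.1, 0.9, 0.2],
}

# Rank passages by how close they sit to the query in vector space.
ranked = sorted(
    passages,
    key=lambda name: cosine_similarity(query, passages[name]),
    reverse=True,
)
```

Here `ranked` puts "refund policy" first because its vector points in nearly the same direction as the query, regardless of whether the two texts share any keywords.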

This approach is useful for language, but it introduces a critical limitation. Semantic similarity does not guarantee relevance. A vector search can return content that commonly appears alongside, or in place of, the query's wording yet has no connection to the actual entities, relationships or constraints inside the enterprise.

A pre-LLM graph layer prevents this problem.

Instead of allowing retrieval to depend solely on linguistic similarity, the graph anchors the process in the domain’s real structure. It evaluates how entities and events connect, how information moves across multi-hop pathways, where domain boundaries exist and which relationships are strong or weak.

Retrieval shifts from “this text sounds similar” to “this information is structurally valid.”

The result is a context package based on verified knowledge rather than probabilistic guesses. The LLM receives information that reflects how the business domain operates, not just how the language appears.

Hybrid Search Aligns Meaning with Structure

A pre-LLM graph layer enables hybrid search, a retrieval method that combines two complementary strengths.

Vectors identify information that is semantically similar, while the graph determines whether that information is contextually correct. The result is retrieval that reflects both the language of a query and the reality of the enterprise system.

To understand this workflow, it helps to clarify what the graph provides.

A graph is a data model built from two elements: entities (represented as nodes) and the relationships between them (represented as edges). These connections are stored explicitly. They do not need to be inferred, reconstructed or guessed.

A graph database uses this structure to represent how customers, accounts, devices, suppliers, transactions, facilities or processes interact across the organization. This structure is essential for retrieval, because enterprise data does not form a flat list of facts. It forms a network of dependencies.

A graph database reflects that network directly. It shows which entities connect, how they connect and how far those connections extend. It also records constraints, hierarchies and multi-hop pathways that are not visible in text.
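
As a rough illustration of the node-and-edge model, the sketch below stores relationships explicitly in plain Python. The `Graph` class, the identifiers and the relation names are all hypothetical and exist only for this example; a production graph database adds schemas, indexing and distributed storage on top of the same basic idea.

```python
from collections import defaultdict


class Graph:
    """A tiny in-memory property graph (illustrative only)."""

    def __init__(self):
        self.nodes = {}                 # node id -> attribute dict
        self.edges = defaultdict(set)   # node id -> {(relation, target id)}

    def add_node(self, node_id, **attrs):
        self.nodes[node_id] = attrs

    def add_edge(self, source, relation, target):
        # The relationship is stored explicitly; nothing is inferred later.
        self.edges[source].add((relation, target))

    def neighbors(self, node_id):
        return sorted(self.edges.get(node_id, ()))


g = Graph()
g.add_node("customer:1", type="Customer")
g.add_node("account:7", type="Account")
g.add_node("device:3", type="Device")
g.add_edge("customer:1", "OWNS", "account:7")
g.add_edge("account:7", "USED_ON", "device:3")
```

Asking for `g.neighbors("customer:1")` returns the stored `OWNS` edge directly, which is the property the article relies on: connections are looked up, not guessed.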

Hybrid search uses both systems at once.

• The vector layer identifies information that aligns with the meaning of the query.

• The graph layer identifies information that aligns with the structure of the domain.

The two can be used as independent sources, or one can feed the other.

For example, if a vector brings back material that sounds relevant but conflicts with known relationships, the graph filters it out. If an entity appears semantically related but is not connected to the correct accounts, devices, suppliers or processes, the graph rejects it. The remaining content reflects both semantic relevance and structural truth.

This alignment keeps the LLM focused on information that fits the organization’s data model, business logic, and operational reality. The model begins its reasoning process with context that is meaningful, connected, and authoritative.
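
The filtering behavior described above can be sketched in a few lines. This is a simplified illustration, not a reference implementation: it assumes each vector hit has already been tagged with the entity it describes, and it treats "structurally valid" as "directly connected to the entity the query is about". The function name, field names and data are hypothetical.

```python
def hybrid_filter(candidates, graph_edges, anchor):
    """Keep vector candidates whose entity is directly linked to the anchor."""
    linked = {dst for src, dst in graph_edges if src == anchor}
    linked |= {src for src, dst in graph_edges if dst == anchor}
    return [c for c in candidates if c["entity"] in linked]


# Hypothetical graph edges and vector search hits.
edges = [("customer:1", "account:7"), ("customer:2", "account:9")]
candidates = [
    {"text": "Account 7 had a chargeback in March.", "entity": "account:7", "score": 0.91},
    {"text": "Account 9 had a chargeback in March.", "entity": "account:9", "score": 0.93},
]

# The query concerns customer:1, so only that customer's account survives,
# even though the other passage has the higher similarity score.
kept = hybrid_filter(candidates, edges, anchor="customer:1")
```

A real system would likely allow multi-hop connections and combine graph evidence with the similarity score rather than applying a hard filter, but the principle is the same.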

Context Injection Adds Domain Awareness

Pre-LLM graph workflows take the next step by assembling the specific slice of the enterprise graph that is relevant to the query.

Instead of handing the LLM a loose set of documents, the system delivers a structured snapshot of the domain. This includes the entities involved, how they link together, the rules that shape their behavior and the multi-hop pathways that give those relationships meaning.

This is what makes context injection so effective.

The model works from a coherent representation of the environment it is reasoning about, not disconnected text fragments that happen to share keywords or phrasing.

For questions involving lineage, dependencies, shared identifiers, or multi-entity interactions, this structure prevents the model from drifting or misinterpreting the task. It begins with a grounded understanding of the domain instead of having to guess at the shape of it.
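
One way to picture context injection is as a bounded traversal whose result is serialized into the prompt. The sketch below assumes the graph is available as (subject, relation, object) triples and uses a breadth-first walk limited to a fixed number of hops. The function names, the triple data and the arrow-style text format are illustrative assumptions, not a prescribed format.

```python
from collections import deque


def k_hop_triples(triples, start, max_hops):
    """Collect facts reachable from `start` within `max_hops` outgoing hops."""
    outgoing = {}
    for s, r, o in triples:
        outgoing.setdefault(s, []).append((r, o))

    seen = {start}
    queue = deque([(start, 0)])
    collected = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for r, o in outgoing.get(node, []):
            collected.append((node, r, o))
            if o not in seen:
                seen.add(o)
                queue.append((o, depth + 1))
    return collected


def render_context(facts):
    """Serialize facts as one line each, ready to place in a prompt."""
    return "\n".join(f"{s} -[{r}]-> {o}" for s, r, o in facts)


# Hypothetical supply chain fragment.
triples = [
    ("supplier:A", "SUPPLIES", "component:X"),
    ("component:X", "USED_IN", "facility:F1"),
    ("facility:F1", "SHIPS_TO", "region:EU"),
    ("supplier:B", "SUPPLIES", "component:Y"),
]
facts = k_hop_triples(triples, "supplier:A", max_hops=2)
context = render_context(facts)
```

With a two-hop limit the snapshot contains the supplier, its component and the facility that uses it, while the unrelated supplier and the third-hop shipping edge are left out.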

Retrieval Augmented Generation Becomes More Reliable

When the graph governs what information reaches the model, retrieval augmented generation becomes far more stable. The graph ensures that the LLM receives only verified, connected, and accurate data.

This matters in domains where context must be precise.

• A financial transaction has meaning only when linked to its accounts, devices and merchants.

• A supply chain disruption can be understood only by following how it flows across upstream and downstream dependencies.

• A clinical, operational, or logistics workflow only makes sense when evaluated in its full sequence.

Shallow retrieval pulls information that feels relevant. Structural retrieval pulls information that is relevant. The more the domain depends on multi-hop reasoning, the more essential this becomes.

How Graphs Strengthen AI After the LLM

Graph Validation Ensures Structural Integrity

After the model generates an answer, the graph shifts into verification mode. LLMs do not know whether the entities they mention are real or whether the relationships they describe are possible. They generate language, not logic.

A post-LLM graph layer validates output against authoritative structure, identifying:

• nonexistent or mismatched entities

• incorrect or impossible relationships

• reasoning paths that violate known constraints

• contradictions with ground truth

For any environment where accuracy and trust matter, this validation step is non-negotiable.
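
The checks listed above can be approximated with simple set lookups. This sketch assumes the model's answer has already been parsed into (subject, relation, object) claims, which is a separate step not shown here. The status labels and sample data are hypothetical, and constraint checking on full reasoning paths is left out for brevity.

```python
def validate_claims(claims, known_entities, known_triples):
    """Label each claimed triple against the authoritative graph."""
    report = []
    for s, r, o in claims:
        if s not in known_entities or o not in known_entities:
            status = "unknown_entity"            # nonexistent or mismatched entity
        elif (s, r, o) not in known_triples:
            status = "unsupported_relationship"  # entities exist, link does not
        else:
            status = "verified"
        report.append(((s, r, o), status))
    return report


# Hypothetical ground truth and extracted claims.
known_entities = {"account:7", "customer:1", "device:3"}
known_triples = {("account:7", "OWNED_BY", "customer:1")}
claims = [
    ("account:7", "OWNED_BY", "customer:1"),
    ("account:7", "OWNED_BY", "device:3"),
    ("account:42", "OWNED_BY", "customer:1"),
]
report = validate_claims(claims, known_entities, known_triples)
```

Only the first claim comes back as verified. The second names real entities in a relationship the graph does not contain, and the third refers to an account that does not exist.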

Reducing Hallucinations with Verified Context

Hallucinations are not rare edge cases. They are a natural behavior of probabilistic models.

Post-LLM graph checks mitigate this by comparing the model’s claims to the knowledge graph. If a claim cannot be validated, the system can correct it, regenerate it under tighter constraints or block it entirely.

This keeps risk under control in workflows where incorrect answers carry real consequences.
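
A regenerate-or-block policy might look like the sketch below. It assumes a `generate` callable that returns both the answer text and its extracted claims, and an `is_supported` callable that checks one claim against the graph. Both are stand-ins invented for this example, and the correction path mentioned above is omitted.

```python
def answer_with_guardrail(generate, is_supported, max_attempts=2):
    """Retry generation until every claim is supported, otherwise block."""
    for attempt in range(1, max_attempts + 1):
        text, claims = generate(attempt)
        if all(is_supported(claim) for claim in claims):
            return {"status": "accepted", "text": text, "attempts": attempt}
    return {"status": "blocked", "text": None, "attempts": max_attempts}


# Hypothetical ground truth and a stub standing in for the LLM call.
known = {("account:7", "OWNED_BY", "customer:1")}


def fake_generate(attempt):
    if attempt == 1:
        # First draft contains a claim the graph cannot confirm.
        return ("Account 7 belongs to customer 2.",
                [("account:7", "OWNED_BY", "customer:2")])
    return ("Account 7 belongs to customer 1.",
            [("account:7", "OWNED_BY", "customer:1")])


result = answer_with_guardrail(fake_generate, lambda claim: claim in known)
```

In this run the first draft is rejected and the second is accepted. If no draft passes within `max_attempts`, the function returns a blocked result instead of releasing an unverified answer.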

Enforcing Policy, Compliance and Domain Constraints

LLMs have no internal understanding of compliance boundaries or business rules. A post-LLM graph layer enforces those rules, ensuring that generated answers respect:

• lineage and dependency constraints

• internal policy requirements

• access controls

• regulatory obligations

This creates an audit-ready workflow. It also maintains organizational trust.
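
As one narrow example of rule enforcement, the sketch below applies an access-control check to the facts an answer cites. It assumes every node carries a sensitivity label and every user has a set of cleared labels. The label names and the fail-closed default for unlabeled nodes are assumptions made for this illustration, not a description of any particular product's security model.

```python
def redact_unauthorized(facts, node_labels, user_clearances):
    """Drop facts that touch any node the user is not cleared to see."""
    allowed = []
    for s, r, o in facts:
        # Unlabeled nodes are treated as "restricted" (fail closed).
        labels = {
            node_labels.get(s, "restricted"),
            node_labels.get(o, "restricted"),
        }
        if labels <= user_clearances:
            allowed.append((s, r, o))
    return allowed


# Hypothetical labels and cited facts.
node_labels = {
    "customer:1": "public",
    "account:7": "internal",
    "audit:3": "restricted",
}
facts = [
    ("customer:1", "OWNS", "account:7"),
    ("account:7", "FLAGGED_IN", "audit:3"),
]
visible = redact_unauthorized(facts, node_labels, {"public", "internal"})
```

A user cleared for public and internal data sees the ownership fact but not the audit link. Logging which facts were dropped, and why, is what would make such a step auditable.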

Disambiguation for Entity Reliability

Models frequently confuse people, accounts, businesses or devices that share similar attributes. A post-LLM graph layer resolves these ambiguities by using verified relationships to select the correct entity. Without this safeguard, a system may produce an answer that is fluent but tied to the wrong object.
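
A simple form of graph-based disambiguation is to prefer the candidate that shares the most neighbors with entities already established in the conversation. The sketch below assumes a precomputed neighbor map and a non-empty candidate list. The scoring rule, function name and identifiers are illustrative, and real entity resolution typically weighs many more signals.

```python
def disambiguate(candidates, neighbors, context_entities):
    """Pick the candidate sharing the most graph neighbors with the context.

    Assumes `candidates` is non-empty. Ties go to the earliest candidate.
    Returns None when no candidate has any supporting relationship.
    """
    def overlap(candidate):
        return len(neighbors.get(candidate, set()) & context_entities)

    best = max(candidates, key=overlap)
    return best if overlap(best) > 0 else None


# Two hypothetical people with the same name but different connections.
neighbors = {
    "person:jsmith-1": {"account:7", "device:3"},
    "person:jsmith-2": {"account:9"},
}
candidates = ["person:jsmith-1", "person:jsmith-2"]

chosen = disambiguate(candidates, neighbors, {"device:3"})
```

Because the context mentions `device:3`, the first J. Smith is selected. When nothing in the graph links any candidate to the context, returning `None` lets the caller ask for clarification rather than guess.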

The Case for Using Graph Before and After the LLM

Using the graph on only one side of the LLM captures part of the benefit. Using it on both sides creates a system that is far more stable, grounded, and predictable.

Why this dual model works best:

• Pre-LLM graphs supply the structured context.

• Post-LLM graphs supply structural verification.

• Together, they reduce both retrieval uncertainty and output uncertainty.

This two-phase model is a preferred pattern for identity, fraud, operations, customer intelligence, regulatory workflows and risk analysis.

TigerGraph’s Role in Graph–LLM Systems

TigerGraph is built for environments where AI needs structure, scale and explainability. Its architecture supports both sides of the graph–LLM workflow, grounding retrieval before the model generates an answer and validating the output afterward.

Real-time multi-hop traversal

TigerGraph evaluates multi-step relationships at scale. It follows customers to accounts to devices, and suppliers to components to facilities, and it does this without flattening or approximating the structure. This makes it suitable for GraphRAG, hybrid search and any retrieval process that depends on accurate relationship mapping.

Schema-driven governance

TigerGraph enforces a consistent data model. Entities, attributes and relationships are defined once and interpreted uniformly across applications. This prevents drift and ensures that every retrieval or validation step aligns with the organization’s business logic.

Entity resolution at scale

Identity data is rarely clean or consistent. TigerGraph unifies fragmented records across systems. This creates reliable entities, which in turn produces cleaner retrieval, fewer false positives and more accurate reasoning.

Parallel computation for large datasets

Its graph engine performs parallel computation across highly connected datasets. This makes it possible to incorporate graph context and graph guardrails into real-time workflows rather than relying on batch processes.

Low-latency integration with LLM workflows

TigerGraph is engineered to assemble structured context before the model runs, and to validate claims after the model responds. It makes hybrid retrieval and post-generation verification practical at scale.

A foundation for explainable, grounded AI

By supplying real structure before generation and verifying output afterwards, TigerGraph enables graph-aware AI systems that remain accurate, traceable and aligned with real operational behavior.

Next Steps: Build AI That Operates with Structure and Clarity

Organizations exploring GraphRAG, hybrid search or graph-powered AI can evaluate TigerGraph’s platform to see how its real-time graph traversal, schema-driven modeling and enterprise-grade governance strengthen AI reliability.

To see how these capabilities apply to your environment, connect with the TigerGraph team and review reference architectures, technical resources and deployment options tailored to your use case.

Frequently Asked Questions

1. Should graphs be used only for retrieval or also for validating AI outputs?

Graphs are most effective when used in both stages. Before generation, they ground retrieval in real entities and relationships. After generation, they validate that the AI’s claims align with authoritative structure, reducing hallucinations and risk.

2. How does graph-based context change what an LLM can safely rely on?

Graph context constrains the model to verified entities, dependencies, and rules. Instead of relying on probabilistic language patterns, the LLM reasons within a structured domain that reflects how the organization actually operates.

3. What risks increase when graphs are added only after an LLM generates a response?

If graphs are used only for post-generation checks, the LLM may reason from incomplete or misleading context. This increases the likelihood of flawed logic paths that must be corrected later instead of being prevented upfront.

4. Why is hybrid search critical when graphs are used before the LLM?

Hybrid search combines semantic similarity with structural validation. Vectors surface meaning, while graphs confirm correctness. Together, they ensure the AI retrieves information that both sounds relevant and is operationally valid.

5. In which enterprise scenarios is a dual graph–LLM approach most important?

High-stakes domains like fraud detection, identity resolution, regulatory compliance, supply chain analysis, and customer intelligence benefit most. These workflows depend on multi-hop relationships where both retrieval accuracy and output verification are essential.