The Missing Layer in Your AI Stack

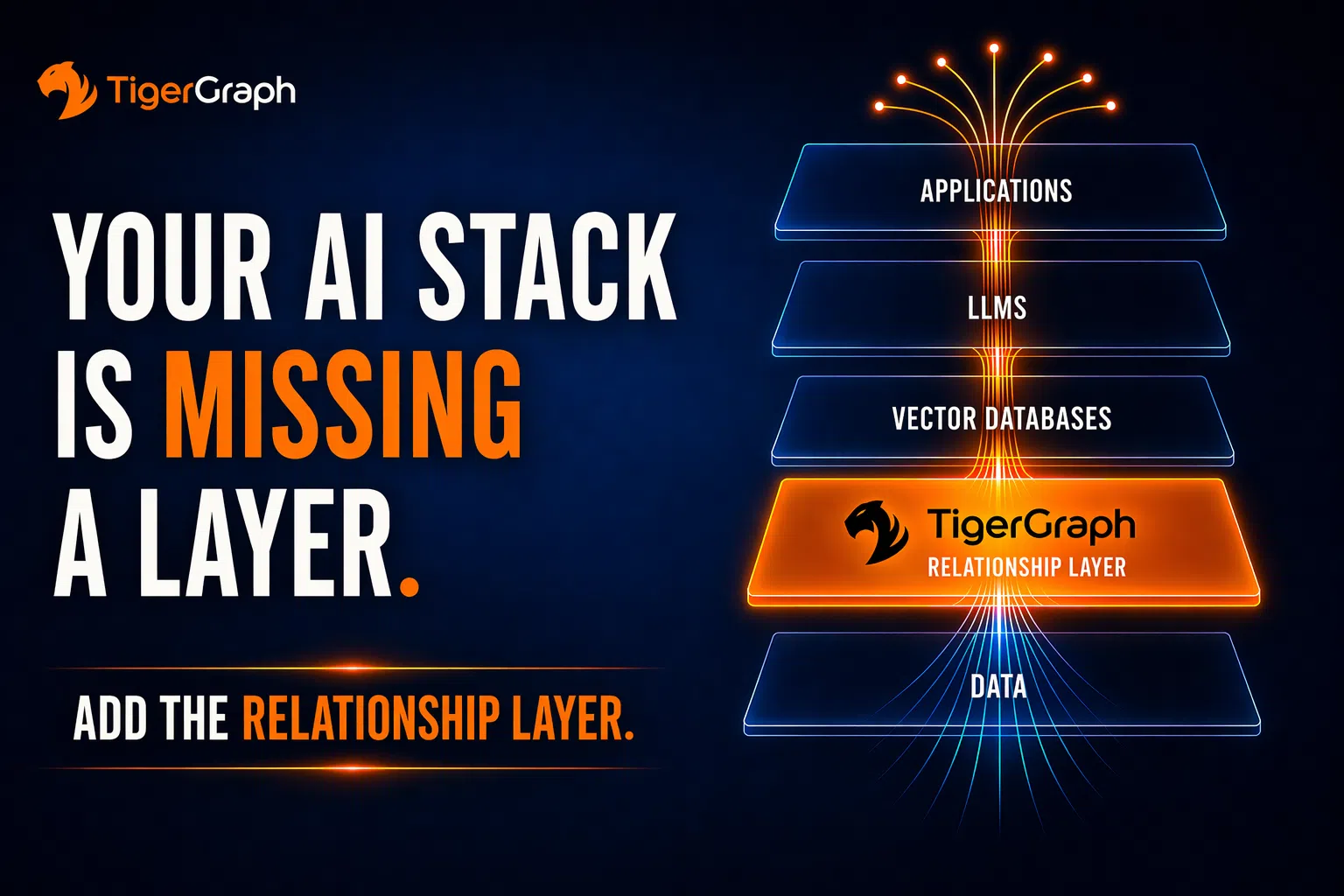

Most AI stacks look complete. On paper, they have everything: applications, large language models (LLMs), vector databases, and enterprise data. It feels like a full system. It isn’t.

Because what looks complete is missing a critical form of understanding, one that no amount of data, retrieval, or model size can compensate for. Similarity finds data. Relationships explain it. And that distinction is where most AI systems break.

What’s Missing

What’s missing is not more data. It’s not a better model. It’s not more retrieval. It’s a layer that understands how things connect, not just how they look in isolation. Call it what it is:

A Relationship Runtime.

A layer that computes connections directly, instead of asking the model to infer them on every request.

Why Similarity Isn’t Enough

Vector systems retrieve based on similarity. That works for locating information that appears related to a query. It is effective for search. But enterprise decisions are not driven by similarity. They are driven by structure.

Similarity tells you what is near. Relationships tell you what matters.

A transaction is not risky because it looks like another transaction. It is risky because of how it connects to other accounts, to shared devices, and to coordinated behavior over time. Those are not similarity problems. They are relationship problems. In practice, most AI stacks look like this: data → vector search → model → application. What’s missing is the step in between, where relationships are computed, not guessed.
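To make the distinction concrete, here is a minimal sketch in plain Python. Everything in it is hypothetical: the feature vectors, the `device_of` mapping, and the transaction names are invented for illustration. It shows two transactions that look nothing alike by cosine similarity yet are directly linked through a shared device.

```python
import math

# Hypothetical feature vectors (amount, hour of day, merchant category), scaled to 0..1.
features = {
    "tx_a": [0.90, 0.10, 0.20],
    "tx_b": [0.10, 0.80, 0.90],
}

# Hypothetical relationship facts: the device that initiated each transaction.
device_of = {"tx_a": "device_7", "tx_b": "device_7"}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm


def shares_device(t1, t2):
    """True when both transactions were initiated from the same device."""
    return device_of[t1] == device_of[t2]


similarity = cosine(features["tx_a"], features["tx_b"])  # low: they do not look alike
linked = shares_device("tx_a", "tx_b")                   # True: they are connected
```

A similarity search seeded with `tx_a` would likely never surface `tx_b`, even though the shared device is exactly the signal a fraud decision needs.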

Where the Stack Breaks

When relationships are not explicitly represented, the system does not fail immediately. It compensates. The LLM is forced to infer connections between entities, reconstruct context from fragments, and resolve ambiguity across loosely related documents. This work is pushed upward into the model because there is nowhere else for it to happen. And that is where the inefficiency begins.

What the System Actually Does Without Relationships

Consider a simple question: “Is this transaction fraudulent?” A similarity-based system retrieves transactions that look alike, users with similar patterns, and documents describing related behavior. But similarity does not assemble a network. So the model is forced to construct one. It has to infer that two accounts are connected through a shared device, recognize that the same device appears across multiple identities, and identify that those identities form a coordinated pattern over time.

None of that structure is given. It is reconstructed from fragments inside the model. On every request. That is not retrieval. That is repeated graph traversal happening in the most expensive layer of the system.
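For comparison, the traversal the model is implicitly asked to perform is cheap when the connections are stored explicitly. The sketch below is an illustration only, written in plain Python with invented login records and a hypothetical `linked_accounts` helper. It is not how any particular graph database implements traversal. It uses a breadth-first search to find every account reachable from a starting account through shared devices.

```python
from collections import defaultdict, deque

# Hypothetical login records: (account, device).
logins = [
    ("acct_1", "dev_A"),
    ("acct_2", "dev_A"),
    ("acct_2", "dev_B"),
    ("acct_3", "dev_B"),
    ("acct_4", "dev_C"),
]


def build_graph(pairs):
    """Build an undirected account <-> device adjacency map."""
    graph = defaultdict(set)
    for account, device in pairs:
        graph[account].add(device)
        graph[device].add(account)
    return graph


def linked_accounts(graph, start):
    """Return every other account reachable from `start` via shared devices."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return sorted(n for n in seen if n.startswith("acct_") and n != start)


graph = build_graph(logins)
ring = linked_accounts(graph, "acct_1")  # ['acct_2', 'acct_3']
```

Here `acct_3` never shares a device with `acct_1` directly. The link only appears after two hops through `acct_2`, which is the kind of path that fragments retrieved by similarity do not surface.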

The Real Consequence

Without a relationship layer, context is fragmented because connections are implicit, signals are incomplete because paths are not surfaced, and decisions degrade because the system never sees the full structure. The system compensates in the only way it can: it retrieves more, processes more, and guesses more.

More context doesn’t create clarity. It creates more work. And that work shows up during inference, where it is most expensive and least scalable.

The Hidden Cost of Missing Relationships

When relationships are not resolved ahead of time, they are resolved during inference. That means more tokens to represent possible connections, more attention computation to evaluate them, more latency before an answer is generated, and more GPU time consumed per request. But more importantly, it means the same work is repeated. Every query reconstructs the same relationships. Every request pays for the same reasoning. Over and over again.

If relationships aren’t resolved before the model runs, the model pays to figure them out every time.
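A back-of-the-envelope sketch shows how the repetition adds up. Every number here is invented for illustration and is not a measurement. The model also counts prompt tokens only, ignoring the cost of running the graph side, so treat it as a way to reason about the shape of the cost rather than its size.

```python
def total_tokens(requests, base_tokens, relationship_tokens):
    """Tokens processed when every request carries `relationship_tokens` of connection context."""
    return requests * (base_tokens + relationship_tokens)


# All values below are hypothetical.
REQUESTS = 1_000              # requests touching the same set of entities
BASE_TOKENS = 500             # the question plus instructions
RAW_FRAGMENT_TOKENS = 4_000   # loosely related fragments the model must connect itself
RESOLVED_FACT_TOKENS = 200    # compact, already-resolved relationship facts

inferred = total_tokens(REQUESTS, BASE_TOKENS, RAW_FRAGMENT_TOKENS)      # 4_500_000
precomputed = total_tokens(REQUESTS, BASE_TOKENS, RESOLVED_FACT_TOKENS)  # 700_000
saved = inferred - precomputed                                           # 3_800_000
```

Under these assumed figures the gap grows linearly with request volume, because the relationship work is paid again on every call.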

Why This Becomes Inevitable

This is not an optimization problem. It is an architectural one. As systems scale: more data is integrated, more workflows depend on AI, and more decisions are automated. The amount of relationship inference pushed into the model grows continuously. And with it: cost accelerates, latency becomes unstable, throughput declines, and infrastructure demand increases. Most teams don’t hit a model limit. They hit a context limit first.

Where TigerGraph Becomes Necessary

This isn’t about adding a capability. It’s about moving a class of computation to where it belongs. Today, relationship traversal is happening inside the model, where it is expensive, repeated, and opaque.

That is the mistake.

TigerGraph moves that computation into a system designed for it. By representing data as a graph, it resolves entities and relationships explicitly and can traverse multi-hop connections in real time. Instead of sending large volumes of loosely related data into the model, it returns a precise, connected structure of the relevant facts. The model no longer has to discover how things relate. It is given that structure directly.

Stop asking the model to discover the graph. Give it the graph.
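What "giving it the graph" could look like is sketched below in plain Python. This is an illustration under stated assumptions, not TigerGraph's API: the edge list, the `neighborhood_facts` helper, and the arrow-style text format are all hypothetical. The idea is to collect only the edges within a few hops of the entity in question and hand the model that compact set of facts.

```python
from collections import deque

# Hypothetical edges: (source, relation, target).
edges = [
    ("tx_991", "sent_from", "acct_1"),
    ("acct_1", "used_device", "dev_A"),
    ("acct_2", "used_device", "dev_A"),
    ("acct_2", "flagged_for", "chargeback"),
    ("acct_9", "used_device", "dev_Z"),
]


def neighborhood_facts(edges, start, max_hops):
    """Collect edges within `max_hops` of `start`, treating edges as undirected."""
    adjacency = {}
    for edge in edges:
        src, _, dst = edge
        adjacency.setdefault(src, []).append((dst, edge))
        adjacency.setdefault(dst, []).append((src, edge))
    depth = {start: 0}
    queue = deque([start])
    facts = []
    while queue:
        node = queue.popleft()
        if depth[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor, edge in adjacency.get(node, []):
            if edge not in facts:
                facts.append(edge)
            if neighbor not in depth:
                depth[neighbor] = depth[node] + 1
                queue.append(neighbor)
    return facts


def as_prompt_context(facts):
    """Serialize facts as one short line each for inclusion in a prompt."""
    return "\n".join(f"{s} --{r}--> {t}" for s, r, t in facts)


facts = neighborhood_facts(edges, "tx_991", max_hops=4)
context = as_prompt_context(facts)
```

The unrelated `acct_9` edge never reaches the prompt, while the four-hop path from the transaction to the earlier chargeback arrives as four short lines.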

What disappears is not just excess context. It is entire classes of unnecessary computation: repeated relationship inference, attention over irrelevant data, and redundant reasoning cycles.

From Missing Layer to System Efficiency

This is why the Relationship Runtime matters. Without it, the system relies on expensive, repeated inference to reconstruct relationships. With it, relationships are resolved once, efficiently, and reused across queries. That shifts work out of the most expensive layer in the stack and into a system purpose-built for it. And that is what makes the entire system scalable.
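The "resolved once, reused across queries" idea can be sketched with a small union-find pass. This is a simplified illustration in plain Python with invented data, not a description of how TigerGraph stores or serves relationships. The clusters of linked accounts are computed a single time, and each later query becomes a lookup instead of a fresh traversal.

```python
from collections import defaultdict

# Hypothetical login records: (account, device).
logins = [
    ("acct_1", "dev_A"),
    ("acct_2", "dev_A"),
    ("acct_2", "dev_B"),
    ("acct_3", "dev_B"),
    ("acct_4", "dev_C"),
]


def resolve_clusters(pairs):
    """Union-find pass: map each account to the set of accounts it is linked to."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps chains short
            x = parent[x]
        return x

    for account, device in pairs:
        parent[find(account)] = find(device)

    clusters = defaultdict(set)
    for account, _ in pairs:
        clusters[find(account)].add(account)
    return {
        account: frozenset(members)
        for members in clusters.values()
        for account in members
    }


cluster_of = resolve_clusters(logins)  # relationships resolved once


def same_ring(a, b):
    """Per-query check: a lookup against the precomputed clusters."""
    return b in cluster_of[a]
```

Each call to `same_ring` reuses `cluster_of`, so the cost of working out who is connected to whom is paid once rather than once per request. A real system would also need to keep the clusters current as new data arrives, which this sketch leaves out.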

The Real Takeaway

Most AI stacks are not incomplete because they lack models or data. They are incomplete because they lack a way to compute how data connects. Without that, every request rebuilds the same relationships from scratch inside the most expensive layer in the system. That doesn’t just increase cost. It defines it.

The systems that scale won’t be the ones with the largest models. They will be the ones that stop doing the same work twice.